Some LGO students dive deep into scientific research topics and work at a company as R&D interns. These projects use cutting-edge technologies to solve unique problems or make new discoveries. The students use engineering research practices and find ways to apply those discoveries in a business context. The result is often a new product or design.

Automation of NC Programming With Artificial Intelligence

Michael Lunny (LGO ’22)

Engineering Department: Aeronautics and Astronautics

Company: American Industrial Partners (AIP)

Location: Kansas City, MO

Problem: AIP portfolio company Orizon manufactures metallic structural assemblies for various hardware systems, primarily aircraft. These assemblies, fabricated using subtractive manufacturing methods on CNC machines, are often produced in high volumes, so machine time must be kept to a minimum. A typical Orizon product has complex geometry, requiring several months of engineering effort to develop the program governing the CNC machine operation. This development process, referred to as programming, is a bottleneck that limits Orizon’s operational efficiency and its penetration into new markets.

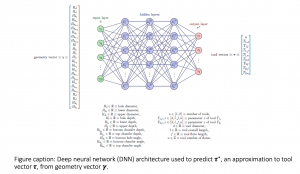

Approach: Michael worked on two solution approaches. The first approach is Geometry-Rule-based Automation of Programming (GRAP), which is a rule-based system with the ability to recognize hole and pocket features and automatically create an associated program. The second approach is Deep Learning for Automated Tool Selection (DLATS), which is a machine learning algorithm with the ability to select the appropriate cutting tool for a hole drilling process.
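To make the rule-based idea concrete, here is a minimal sketch of how recognized features might be mapped to machining operations. It is not Michael's GRAP implementation: the `Feature` class, the `plan_operations` function, the operation names, and the depth-to-diameter threshold are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Feature:
    """A recognized geometric feature (hypothetical structure)."""
    kind: str               # "hole" or "pocket"
    x: float                # position on the part, mm
    y: float
    depth: float            # mm
    diameter: float = 0.0   # mm, used for holes only


def plan_operations(features):
    """Map each feature to one or more named operations using fixed rules."""
    ops = []
    for f in features:
        if f.kind == "hole":
            # Illustrative rule (an assumption): holes deeper than
            # 3x their diameter are peck drilled, others drilled directly.
            cycle = "peck_drill" if f.depth > 3 * f.diameter else "drill"
            ops.append((cycle, f.x, f.y, f.depth))
        elif f.kind == "pocket":
            # Illustrative rule: every pocket gets a roughing pass
            # followed by a finishing pass.
            ops.append(("rough_pocket", f.x, f.y, f.depth))
            ops.append(("finish_pocket", f.x, f.y, f.depth))
        else:
            raise ValueError(f"no rule for feature kind: {f.kind}")
    return ops


features = [
    Feature(kind="hole", x=0.0, y=0.0, depth=30.0, diameter=6.0),
    Feature(kind="pocket", x=10.0, y=10.0, depth=5.0),
]
for op in plan_operations(features):
    print(op)
```

A learned approach such as DLATS would, in this picture, replace a hand-written rule (for example, the choice of drilling cycle or cutting tool) with a model trained on data from past programs.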

Impact: Using these approaches, Michael developed novel methods to help reduce typical part programming time and, by extension, onboarding time, which has a substantial impact on Orizon’s business in multiple ways. Most notably, with improved onboarding processes, Orizon will incur lower non-recurring engineering costs associated with developing machine programs. Additionally, because programming is a bottleneck process, improved operational efficiency will increase production and allow Orizon to take on more contracts and capture higher revenues. Furthermore, by investing in world-class machining assets and adopting a data-intensive strategy, Orizon has positioned itself as a market leader.

Enabling Commercial Autonomy in Aviation: An Ontology and Framework for Automating UAS

Juliette Chevallier (LGO ’21)

Engineering Department: Aeronautics and Astronautics

Company: Boeing

Problem: At Boeing, alignment across a program early in the ideation and development cycle is critical to success, and saving time early on can allow more of the budget to fund actual product development. Autonomy is a new and complex topic that many individuals approach with preconceived notions, which can be a hurdle when beginning a new program, leading to misalignment and lost time. This project aimed to provide a clear guide for program managers to manage new commercial programs with a significant autonomy requirement.

Approach: Juliette’s guide included both an ontology and a framework for understanding the increased operational risk associated with a particular autonomous functionality. For the ontology, she used object process methodology (OPM) to model it and initially validate it against the metrics of completeness, unambiguity, and congruity. To create the proposed framework, Juliette performed an extensive literature review across different domains, considering over 20 separate frameworks. The final framework model was validated by applying it to 13 use cases, both real and theoretical.

Impact: Internally, the ontology and framework developed by Juliette provide program managers with a series of concept definitions and relationships that they can choose to implement or adapt as they see fit. Externally, the ontology and framework can be used to describe a particular concept of operations to a regulator. Additionally, the framework may be used to analyze competitors’ efforts and see where other parties are accepting uncertainty. This can further inform the areas of product development for Boeing.

Assessing the opportunity to produce Nitinol medical device components using additive manufacturing

Arvind Kalidindi (LGO ’19)

Engineering Department: Materials Science and Engineering (PhD)

Company: Boston Scientific

Location: Clonmel, Ireland

Problem: The strength and elasticity of Nitinol (a nickel-titanium alloy) make it an important alloy for medical device applications. However, Nitinol is hard to manufacture cost-effectively while maintaining its superelastic properties. Boston Scientific sought to leverage Arvind’s PhD-level expertise in additive manufacturing to explore 3D printing as a new way to manufacture Nitinol effectively.

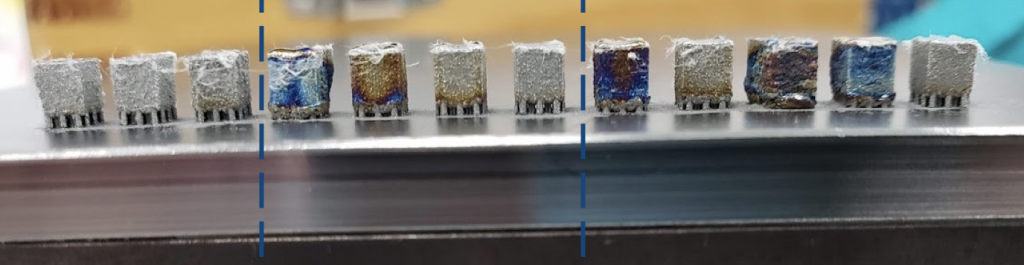

Approach: At Boston Scientific’s plant in Clonmel, Ireland, Arvind printed a hundred Nitinol samples as part of a design of experiments procedure to optimize for desired part characteristics. Arvind identified loss of nickel during printing as the key engineering challenge, and created a cost and engineering model for addressing this challenge during future 3D printing operations.

Impact: Arvind found that 3D prototyping and printing of millimeter-sized components would be a cost-effective way to leverage additive manufacturing in the short term, showing distinct advantages over conventional methods such as micromachining.